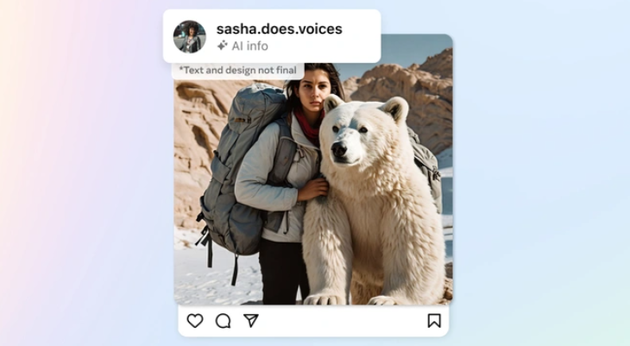

Meta will label content created with the help of artificial intelligence on its Facebook, Instagram and Threads platforms. This should provide more transparency, and hopefully lead to fewer social media uproars like the one we saw last week around Taylor Swift.

AI content

Last year was the year that AI really took off: one AI chatbot after another was introduced, and everyone wondered what this could mean for their job, in both a positive and a negative sense. However, it has also raised many issues for tech companies and governments, including the spread of fake news. Or rather fake images: fake pictures made by pasting one image on top of another. We recently saw it happen with Taylor Swift, and such images are often difficult to distinguish from the real thing.

Help is now coming in the form of a label. When Facebook, Instagram and Threads detect that an image was created by an AI generator, it is given a label. Meta says: “As the difference between human and synthetic content becomes blurred, people want to know where the line is. People are often encountering AI-generated content for the first time, and our users have told us they appreciate transparency around this new technology. So it’s important that we let people know when photorealistic content they see has been created using AI. We do this by applying ‘Imagined with AI’ labels to photorealistic images created with our Meta AI feature, but we also want to be able to do this with content created with tools from other companies.”

Meta wants you to be honest

So if you create an AI image with Google Bard, Microsoft, Adobe, Shutterstock or Midjourney, it will not go unnoticed by Meta’s software. This applies not only to photos but also to videos made with generative AI, something we don’t see very often yet but which will probably play an increasingly important role this year. Meta does expect people to indicate that they have used AI to create the content, because it is not always easy to recognize. If you use AI without reporting it, Meta will take action. It also wants to prevent people from removing watermarks, which is currently possible, just as it is with metadata.

It is clear that Meta is still trying to figure out how to reliably show when generative AI has been used, because it is simply so difficult to recognize. But as it works on its own Meta AI, it will hopefully learn more and more about this, so that it can ‘catch’ most AI creations. Using AI to create content is not a bad thing, but it is good to label it as such. And speaking of which, do you think the robot at the top of this article is AI, or is it a regular image? Let us be transparent: in this case we opted for AI, because it fits perfectly. Now all it needs is a label.